XR in ...

Education and Training

Can you explain this with XR?

Learn more about how XR is used in education and training!

Co-Working and Creativity

Can I meet you in XR?

Learn more about how XR is going to change the way we work and create together!

Therapy and Health

Getting better with XR?

Learn more about how XR technologies can help in therapy and health related fields!

AI and Machine Learning

How can AI benefit from XR technology?

Learn more about how AI can be developed and improved with the help of XR!

Fundamental Research and Knowledge Transfer

How do we know all of this about XR?

Learn more about the fundamental research that is the basis for all XR applications!

News

The Winter EXPO 2026 was a great success, with a variety of demos and projects.

06 Feb 2026

More

We invite you to this year's Winter EXPO on the 6th of February!

21 Jan 2026

More

The Summer EXPO 2025 for HCI/HCS, CS and GE was a great success! A large number of visitors were able to experience up to 120 different demos and projects.

25 Jul 2025

More

We invite you to this year's summer EXPO on the 25th of July!

07 Jul 2025

More

A Hands-On Dive into XR Development with Full-Body Avatars

16 May 2025

More

Girls' Day 2025 was a great success with almost 80 participants this year

03 Apr 2025

More

The HCI and PIIS group presented 11 publications at IEEE VR, receiving a Best Workshop Paper Award, an Honorable Mention Best Paper Award, and a Best Poster Award. Some of the projects were partially funded by the XR Hub.

24 Mar 2025

More

Presentation of Minister Dr. Fabian Mehring's avatar at Bits & Pretzels

10 Oct 2024

More

The Summer EXPO 2024 for HCI/HCS, CS and GE was a great success! A large number of visitors were able to experience up to 120 different demos and projects.

19 Jul 2024

More

On July 04th, 2024, the XR Hub Würzburg will be present at the XR Day in Nuremberg, showcasing as an exhibitor from 14:30 onwards. Join us to witness the latest XR advancements and immerse yourself in the future of technology!

04 Jul 2024

Go to website

The XR Hub participated in the XR Day on July 4th, 2024, at the IHK building in Nuremberg.

04 Jul 2024

More

This year's summer expo is on the 19th of July 2024. Feel free to visit and experience a lot of interesting projects.

18 Jun 2024

More

We presented the HiAvA project at the Transforming Media event

10 Jun 2024

More

The Girls' Day took place on April 25th, 2024, and was a great success! Together with the XR Hub Nuremberg we conducted parallel workshops where the girls got familiar with XR technologies and learned about the background of designing XR experiences.

25 Apr 2024

More

The XR Hub Würzburg and HiAvA attended the XR Expo in Stuttgart, where they made valuable connections with attendees from academia and industry.

04 Apr 2024

More

HCI, PIIS and PsyErgo presented their work at the IEEE VR 2024 conference in Orlando, Florida

21 Mar 2024

More

Bavaria's new Minister of Digital Affairs Dr. Fabian Mehring visited the University of Würzburg for the first time. He was visibly impressed by the projects and achievements of the XR Hub.

11 Mar 2024

More

In a recent appearance on the podcast 'Arbeit, Bildung, Zukunft,' Prof. Dr. Marc Erich Latoschik delved into the metaverse and its intersection with artificial intelligence. For a comprehensive exploration of these topics, tune in to the podcast on the 'Arbeit, Bildung, Zukunft' website.

25 Jan 2024

Go to website

Save-the-date: The winter Expo will take place on the 9th February!

11 Jan 2024

More

On November 14th, 2023, the XR Hub presented at this online event.

08 Jan 2024

More

The XR Hub participated in WueWW on Friday, November 17th, 2023, offering insights into the field of extended reality and showcasing its diverse applications.

20 Nov 2023

More

The XR-Hub Würzburg will join the Wuerzburg Web Week 2022 on 17th November! Make sure to be there to listen to our talk and try out our demonstrations.

03 Nov 2023

Go to website

On November 14th, 2023, the XR Hub will be presenting at this online event.

25 Oct 2023

More

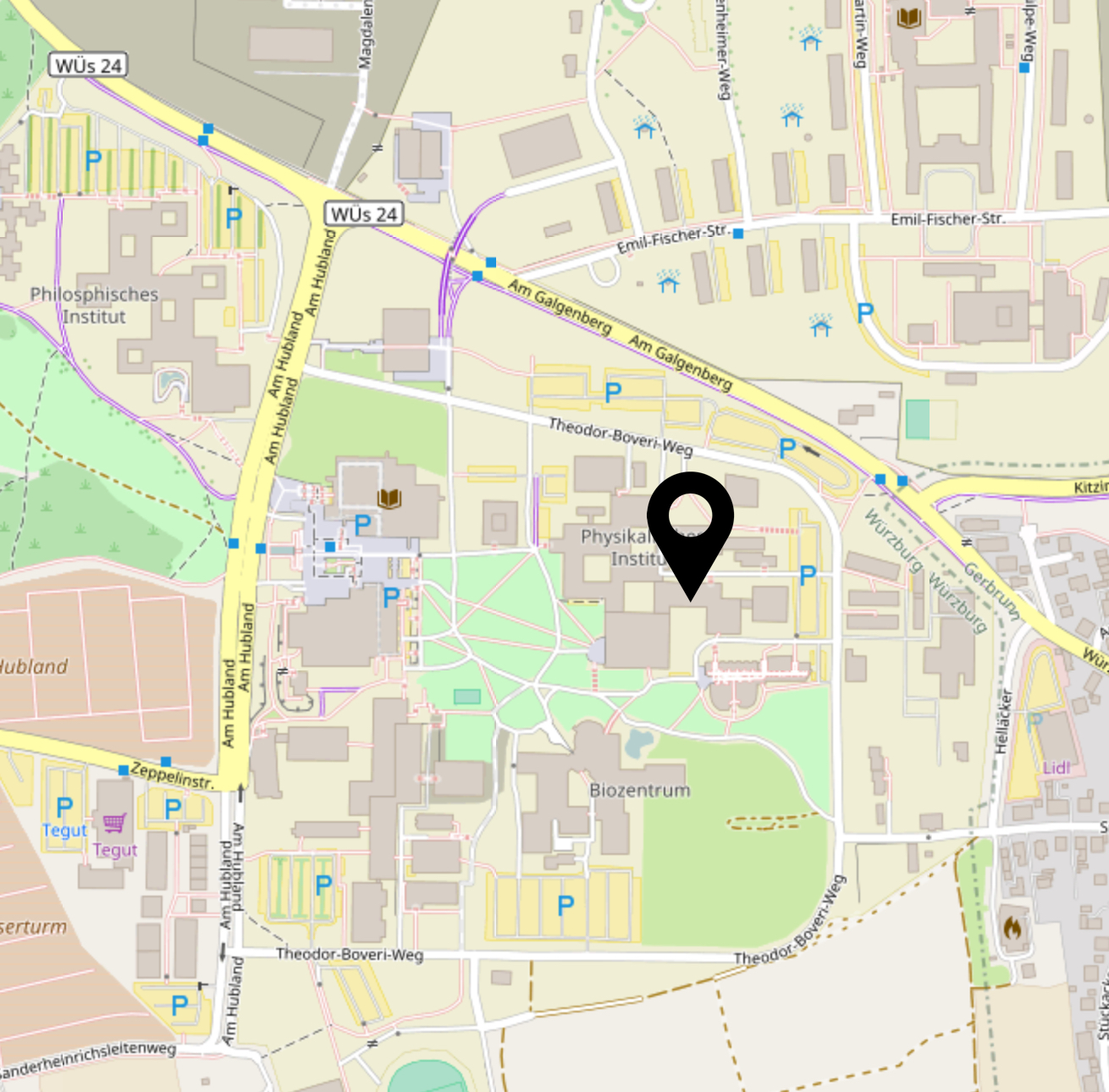

We can now be found at Emil-Fischer-Straße 50 in Hubland North.

13 Sep 2023

More

The Summer EXPO 2023 for MCS/HCI, MK and GE was a great success! A large number of visitors were able to experience up to 120 different demos and projects.

24 Jul 2023

More

Are you interested in Games, Virtual Reality, Human-Computer Interaction, and Science? Then this will be the right event for you!

18 Jul 2023

More

On July 6th, the XR Hub visited the XR Day in Nuremberg in order to showcase the demo 'Virtually Me' and to exchange ideas with XR experts from different fields.

12 Jul 2023

More

On July 6th, 2023, the XR Hub Würzburg will be present at the XR Day in Nuremberg, showcasing as an exhibitor from 16:45 onwards. Join us to witness the latest XR advancements and immerse yourself in the future of technology!

28 Jun 2023

Go to website

On June 16th at 1pm the XR Hub Würzburg hosts the event 'Dive into XR' for the Digitaltag 2023. Among other things, visitors will have the opportunity to experience different tracking solutions in the newly established motion capture lab.

24 May 2023

Go to website

As part of the project 'XR versus soil erosion', the XR-Hub visited the Bayerische Digitalgipfel in Munich.

23 May 2023

More

The Girls' Day took place on April 27th, 2023, and was a great success! Together with the XR Hub Nuremberg we conducted parallel workshops where the girls got familiar with XR technologies and learned about the background of designing XR experiences.

10 May 2023

More

Wuertual Reality, a premier conference in the field of XR, was held last week right here in Würzburg.

17 Apr 2023

More

The 30th IEEE Conference on Virtual Reality and 3D User Interfaces (IEEE VR 2023) was a great success.

13 Apr 2023

More

From April 11th to 13th, the Würtual Reality XR Meeting 2023 will take place at the University of Würzburg, Germany. The HCI Chair offers various demonstrations as well as talks on current research issues for the participants.

07 Mar 2023

More

We are very pleased to present our research at this year's IEEE VR.

02 Mar 2023

More

On the 23rd and 24th of February we visited the AI.BAY 2023 in Munich in order to support CAIDAS and to showcase two of our current projects together with the Center for Artificial Intelligence and Robotics (CAIRO).

27 Feb 2023

More

The Free State of Bavaria supports the XR Hub Würzburg with 730,000 € for another two years. Digital Minister Judith Gerlach handed over the funding certificate to the XR Hub Würzburg.

17 Feb 2023

More

The Winter EXPO 2023 for MCS and HCI was a great success! We thank all the contributors and guests for participating.

14 Feb 2023

More

Save-the-date: The winter Expo will take place on the 10th February!

09 Jan 2023

More

Together with the XR HUB Nuremberg and Labs Network Industrie 4.0 e.V. we organized the online event "XR MEETS FUTURE WORK".

22 Nov 2022

More

Carolin Wienrich, Marc Latoschik, and Andreas Hotho visited the Bavarian Digital Summit.

21 Nov 2022

More

On November 15th, 2022, starting at 14:00, the event "XR meets future work" will take place via Zoom.

07 Nov 2022

More

We are very honored that we got the chance to present our IEEE VR Journal Paper „Breaking Plausibility Without Breaking Presence - Evidence For The Multi-Layer Nature Of Plausibility“ as one of 12 invited high-impact papers at the IEEE VIS.

06 Nov 2022

More

The XR Hub hosted an event for the WueWW on Wednesday, the 26th of October 2022, to give an insight into the world of extended reality and to showcase the potential and fields of application of XR.

06 Nov 2022

More

The XR-Hub Würzburg is happy to report two successful days at the XR Week in Stuttgart.

20 Sep 2022

More

The XR-Hub Würzburg will join the Wuerzburg Web Week 2022 on 26th October! Make sure to be there to listen to our talk and try out our demonstrations.

20 Sep 2022

Go to website

On September 29, 2022, Prof. Latoschik will give a talk on Megatrend XR and the Metaverse at the kick-off Event for the 4th Year of BayFiD.

19 Sep 2022

More

On October 19, 2022, Prof. Latoschik and Prof. Wienrich will speak at "Würzbürger Impulse: Künstliche Intelligenz und Robotik in der Medizin"

19 Sep 2022

More

On September 15th and 16th the XR-Hub Würzburg will be part of the XR Week in Stuttgart.

13 Sep 2022

More

The Summer EXPO 2022 for MCS/HCI, MK and GE was a great success! We thank all the contributors and guests for participating.

29 Jul 2022

More

The XR-Hub Würzburg is pleased to report a successful day at the XR Day in Nuremberg on the 7th of July.

07 Jul 2022

More

Summer Expo 2022 will take place on 29th of July 2022.

15 Jun 2022

Go to website

On July 7, 2022, the XR Hub Würzburg will attend the XR Day in Nuremberg.

14 Jun 2022

More

On May 24th, the XR Hub went on an excursion in order to be part of the "Media meets Health" event in Munich.

13 Jun 2022

More

The XR-Hub was part of an extended reality event organized by the Bavarian Business Association on May 23rd.

13 Jun 2022

More

The MEDIA meets HEALTH event in Munich will take place on May 24th. The ViTraS project will be showcased and the XR Hub will be present.

16 May 2022

More

The XR Hub hosted an event for the WueWW on Friday, the 22nd of October 2021 to give an insight into the world of extended reality and to showcase fields of application of XR.

22 Oct 2021

More

Save-the-date: The 2nd Demo Day of the Bavarian Innovation Labs will take place as an online event on Tuesday, October 12, 2021.

08 Oct 2021

More

The XR Hub Würzburg is thrilled to announce that we will participate in the WueWW! Join our talk and make sure to check out all of the other incredible events.

04 Oct 2021

Go to website

The Places_ VR Festival took place from the 16th to the 18th of September in Gelsenkirchen. The master's thesis 'TooCloseVR', which is affiliated with the XR Hub Würzburg, was nominated for the Best Impact category of the DIVR Science Award.

16 Sep 2021

More

On September 10th the XR Hub was invited for a talk about the possibilities and advantages of XR technologies for innovations.

10 Sep 2021

Go to website

The Human-Computer, Games and Informatics Expo will take place on 16th of July 2021.

15 Jun 2021

Go to website

Check out the new video on networking in the XR community & how to help implement new ideas.

28 May 2021

Go to website

Together with Bavarian Minister of State Judith Gerlach, the XR Hub gave a masterclass called "Dive into XR – use cases and outlook" at Bits&Pretzels 2020.

02 Oct 2020

More

The XR Hub is excited to announce that we will give a masterclass called "XR – Key to your Success" at the Bits&Pretzels 2020. Join us on Friday, October 2nd, at 3:40 pm when avatars explain extended reality!

27 Sep 2020

Go to website

Judith Gerlach, the Bavarian Minister of State for Digital Affairs, visited the HCI and HTS chair on Tuesday, 4th August.

04 Aug 2020

More

A Center for Artificial Intelligence in Data Science (CAIDAS) is being established at the University of Würzburg. Minister of State Dorothee Bär visited to learn about its research.

30 Jul 2020

More

On the 21st of July representatives of XR affiliated companies met for the 1st Industrial Think Tank organized by the XR Hub Würzburg.

21 Jul 2020

More

The project ViLeArn was presented at the Digital Day Bavaria 2020. As part of the XR-Hub slot, the current prototype of project ViLeArn was streamed via Zoom.

19 Jun 2020

More

The potential of virtual and augmented reality in schools is great, and this has been scientifically proven. But how do you get teachers to actually use XR? Sebastian Oberdörfer talks about it in the 7th episode of the XR podcast.

14 May 2020

Go to website

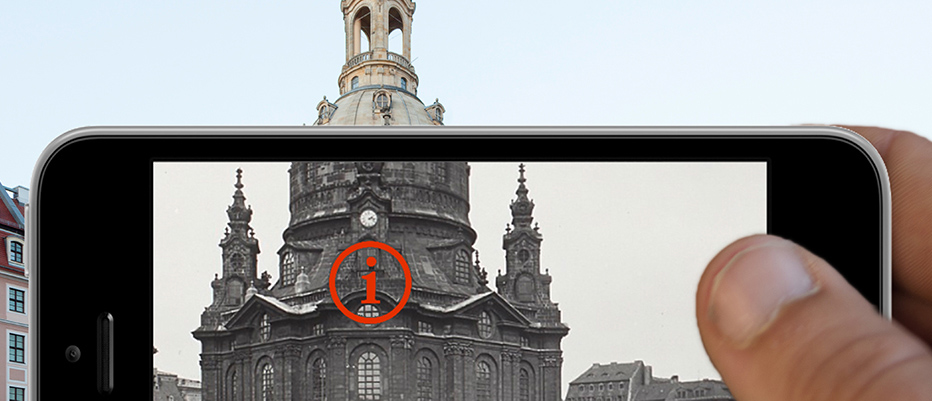

This DECH'17 workshop paper explores methods to work with digital image libraries, from the creation of 3D or in extension time-annotated 4D models, to the eventual dissemination of research findings in teaching/learning scenarios. Pedagogical approaches for learning objectives and methods for including Augmented Reality in mobile learning environments are reviewed.

02 Mar 2020

More

This TVCG'18 best journal paper investigates how personalized and individualized avatars affect body ownership, presence, and emotional response. Several significant as well as a number of notable effects were found, most notably that personalized avatars significantly increase body ownership.

02 Mar 2020

More

This TVCG'19 journal paper investigates performance and user experience of Social Virtual Reality (SVR) targeting distributed, embodied, and immersive, face-to-face encounters. As one result, a user study reveals positive effects of an increasing number of co-located social companions on the quality of experience of virtual worlds.

02 Mar 2020

More

This ICMI'19 *Best Paper Runner Up* article presents a speech and gesture-based interaction paradigm for creative design tasks in VR. The multimodal approach resulted in several advantages, including improved perceived usability, an increased sense of flow and a higher sense of presence.

02 Mar 2020

More

On the 7th of February, academic representatives of Bavarian research groups gathered to conduct the first Think Tank Academic Research in XR.

07 Feb 2020

More

On January 24th, the XR Hub Würzburg and XR Hub Munich Team made an excursion to our colleagues at the FH Upper Austria, Hagenberg Campus to visit their second Mixed Reality Day, organized by Prof. Christoph Anthes.

24 Jan 2020

More

Virtual reality, extended reality, human-computer interaction in education and training - these are the topics of Prof. Marc Latoschik's lecture on 24.01.2020 at 13:00 in the Aquarium of FOS/BOS Kitzingen.

23 Jan 2020

More

The Bavarian Ministry of Digital Affairs is creating competence centers for key technologies in the field of eXtended Reality, called 'XR Hubs', that will network small and medium-sized companies in Bavaria and support them in technology transfer. In addition to a central hub in Munich, 500,000 € per year are invested in regional hubs. The XR Hub Lower Franconia will cooperate with the HCI chair Würzburg.

27 Sep 2019

Go to website

Judith Gerlach, the Bavarian Minister of State for Digital Affairs, visited the HCI chair on Monday, 29th April.

24 Apr 2019

More

Recent Publications

Murat Yalcin, Marc Erich Latoschik,

End-to-End Non-Invasive ECG Signal Generation from PPG Signal: A Self-Supervised Learning Approach, In Frontiers in Physiology.

2026. To be published


@article{yalcin2026endtoend,

author = {Murat Yalcin and Marc Erich Latoschik},

journal = {Frontiers in Physiology},

url = {https://www.frontiersin.org/journals/physiology/articles/10.3389/fphys.2026.1694995/abstract},

year = {2026},

title = {End-to-End Non-Invasive ECG Signal Generation from PPG Signal: A Self-Supervised Learning Approach}

}

Abstract:

Electrocardiogram (ECG) signals are frequently utilized for detecting important cardiac events, such as variations in ECG intervals, as well as for monitoring essential physiological metrics, including heart rate (HR) and heart rate variability (HRV). However, the accurate measurement of ECG traditionally requires a clinical environment, thereby limiting its feasibility for continuous, everyday monitoring. In contrast, Photoplethysmography (PPG) offers a non-invasive, cost-effective optical method for capturing cardiac data in daily settings and is increasingly utilized in various clinical and commercial wearable devices. However, PPG measurements are significantly less detailed than those of ECG. In this study, we propose a novel approach to synthesize ECG signals from PPG signals, facilitating the generation of robust ECG waveforms using a simple, unobtrusive wearable setup. Our approach utilizes a Transformer-based Generative Adversarial Network model, designed to accurately capture ECG signal patterns and enhance generalization capabilities. Additionally, we incorporate self-supervised learning techniques to enable the model to learn diverse ECG patterns through specific tasks. Model performance is evaluated using various metrics, including heart rate calculation and root mean squared error (RMSE) on two different datasets. The comprehensive performance analysis demonstrates that our model exhibits superior efficacy in generating accurate ECG signals (reducing the heart rate calculation error by 83.9% and 72.4% on the MIMIC III and Who is Alyx? datasets, respectively), suggesting its potential application in the healthcare domain to enhance heart rate prediction and overall cardiac monitoring. As an empirical proof of concept, we also present an Atrial Fibrillation (AF) detection task, showcasing the practical utility of the generated ECG signals for cardiac diagnostic applications. To encourage replicability and reuse in future ECG generation studies, we have shared the dataset and will also make the code publicly available.
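
The two evaluation metrics named in this abstract, RMSE and heart rate calculation error, can be illustrated with a minimal sketch. This is not the authors' evaluation code: the function names are hypothetical, and the sketch assumes that R-peak timestamps have already been detected in both the reference and the generated ECG.

```python
import math


def rmse(reference, generated):
    """Root mean squared error between two equal-length signals."""
    if len(reference) != len(generated):
        raise ValueError("signals must have the same length")
    if not reference:
        raise ValueError("signals must not be empty")
    squared = [(r - g) ** 2 for r, g in zip(reference, generated)]
    return math.sqrt(sum(squared) / len(squared))


def heart_rate_bpm(peak_times_s):
    """Mean heart rate in beats per minute from R-peak timestamps in seconds."""
    if len(peak_times_s) < 2:
        raise ValueError("need at least two peaks")
    # Beat-to-beat intervals between consecutive peaks.
    intervals = [b - a for a, b in zip(peak_times_s, peak_times_s[1:])]
    mean_interval = sum(intervals) / len(intervals)
    return 60.0 / mean_interval


def heart_rate_error(reference_peaks_s, generated_peaks_s):
    """Absolute heart rate difference (bpm) between reference and generated ECG."""
    return abs(heart_rate_bpm(reference_peaks_s) - heart_rate_bpm(generated_peaks_s))
```

For example, `heart_rate_bpm([0.0, 1.0, 2.0])` corresponds to one beat per second, i.e. 60 bpm. A real evaluation would additionally need a peak detector and per-window aggregation, which are left out here.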

Marie Luisa Fiedler, Christian Merz, Lukas Schach, Jonathan Tschanter, Mario Botsch, Carolin Wienrich, Marc Erich Latoschik,

Am I Still Me? Visual Congruence Across Reality–Virtuality and Avatar Appearance in Shaping Self-Perception and Behavior, In IEEE Transactions on Visualization and Computer Graphics.

2026. To be published.


@article{fiedler2026still,

author = {Marie Luisa Fiedler and Christian Merz and Lukas Schach and Jonathan Tschanter and Mario Botsch and Carolin Wienrich and Marc Erich Latoschik},

journal = {IEEE Transactions on Visualization and Computer Graphics},

year = {2026},

title = {Am I Still Me? Visual Congruence Across Reality–Virtuality and Avatar Appearance in Shaping Self-Perception and Behavior}

}

Abstract:

This paper presents the first systematic investigation of how congruence in visual self-representation influences self-perception and behavior. We span a continuum from the physical self through avatars with graded self-similarity to clearly dissimilar avatars in virtual reality (VR). In a 1x4 within-user study, participants completed movement and quiz tasks in either physical reality or a digital twin environment in VR, where they embodied one of three avatars: a photorealistic self-similar avatar, a dissimilar same-gender avatar, or a dissimilar opposite-gender avatar. Subjective measures included presence, sense of embodiment, self-identification, and perceived change, and were complemented by an objective movement metric of behavioral change. Compared to physical reality, VR, even with a self-similar avatar, produced lower presence, a weaker sense of embodiment, and reduced self-identification, revealing a persistent gap in visual congruence. Within VR, self-similar avatars enhanced body ownership, self-location, and self-identification relative to dissimilar avatars. Conversely, dissimilar avatars produced measurable behavioral changes compared with self-similar ones. Gender cues, however, had little impact in gender-neutral tasks. Overall, the findings show that photorealistic self-similar avatars reinforce embodiment and self-identification. However, VR still falls short of achieving congruence with physical reality, underscoring key challenges for avatar realism and ecological validity.

Jonathan Tschanter, Christian Merz, Marie Luisa Fiedler, Carolin Wienrich, Marc Erich Latoschik,

Use Case Matters: Comparing the User Experience and Task Performance Across Tasks for Embodied Interaction in VR, In IEEE Transactions on Visualization and Computer Graphics.

2026. To be published


@article{tschanter2026matters,

author = {Jonathan Tschanter and Christian Merz and Marie Luisa Fiedler and Carolin Wienrich and Marc Erich Latoschik},

journal = {IEEE Transactions on Visualization and Computer Graphics},

year = {2026},

title = {Use Case Matters: Comparing the User Experience and Task Performance Across Tasks for Embodied Interaction in VR}

}

Abstract:

Integrated Virtual Reality (IVR) systems are central to avatar-mediated use cases in Virtual Reality (VR), reconstructing users' movements on avatars. They differ primarily in their tracking architectures, which determine how completely and accurately users' movements are captured and reconstructed on avatars.

Many current IVR systems reduce user-worn hardware, trading reconstruction accuracy against cost and setup complexity, yet their impact on user experience and task performance across use cases remains underexplored. We compared three reduced user-worn IVR systems. Each system has distinct technical approaches: (1) Captury (markerless outside-in optical tracking), (2) Meta Movement SDK (markerless inside-out optical tracking), and (3) Vive Trackers (marker-based outside-in optical tracking with IMUs).

In a 3x5 mixed-design, participants performed five tasks, simulating different use cases, to probe distinct aspects of these systems. No system consistently outperformed the others. Meta excelled in hand-based, fast-paced interactions, while Captury and Vive performed better in lower-body tasks and during full-body pose observation. These findings underscore the need to evaluate reduced user-worn IVR systems within the specific use case. We offer practical guidance for system selection based on use-case demands and released our tasks as an open-source, extensible framework to support future evaluations for selecting IVR systems.

Jonathan Tschanter, Christian Merz, Carolin Wienrich, Marc Erich Latoschik,

How Harassment Shapes Self-Perception and Well-Being in Social VR: Evidence from a Controlled Lab Study, In IEEE Transactions on Visualization and Computer Graphics.

2026. To be published


@article{tschanter2026harassment,

author = {Jonathan Tschanter and Christian Merz and Carolin Wienrich and Marc Erich Latoschik},

journal = {IEEE Transactions on Visualization and Computer Graphics},

year = {2026},

title = {How Harassment Shapes Self-Perception and Well-Being in Social VR: Evidence from a Controlled Lab Study}

}

Abstract:

Social Virtual Reality (SVR) allows users to meet and build relationships through embodied avatars and real-time interaction in virtual spaces. While embodiment can strengthen social connections and presence, it can also intensify negative encounters, making SVR particularly vulnerable to harassment. Despite frequent reports of verbal, visual, and "physical" violations in SVR, little is known about how harassment reshapes users' self-perception, including their sense of embodiment, self-identification, closeness, and avatar customization preferences. We conducted a controlled experiment with 52 participants who experienced either a neutral or a harassment condition in a scenario modeled after real SVR incidents. Participants perceived the harassing peer as significantly more negative, annoying, and disturbing than the neutral peer. Contrary to prior reports, harassment did not significantly affect well-being measures, including emotional state, self-esteem, and physiological arousal, within this controlled scenario. However, participants reported stronger bodily change, attributed more of their own attitudes and emotions to their avatars, and increased interpersonal distance when personal space was invaded. Self-reported coping strategies included ignoring, stepping back, using humor, and retaliating. Notably, avatar customization preferences shifted across conditions. Participants in the neutral condition favored personalized avatars, whereas those in the harassment condition more frequently preferred anonymity in public spaces. Together, these findings demonstrate that harassment in SVR not only exploits embodiment but also reshapes self-perception. We further contribute methodological insights into how harassment can be ethically and reproducibly studied in controlled SVR-like experiments.

David Obremski, Paula Friedrich, Carolin Wienrich,

To be Healed or Hacked? - User‑Centered Ethical Design for Embodied AI in Mental Health Care, In IEEE Transactions on Visualization and Computer Graphics.

2026. To be published


@article{obremski2026healed,

author = {David Obremski and Paula Friedrich and Carolin Wienrich},

journal = {IEEE Transactions on Visualization and Computer Graphics},

year = {2026},

title = {To be Healed or Hacked? - User‑Centered Ethical Design for Embodied AI in Mental Health Care}

}

Abstract:

The global prevalence of mental health disorders has created a substantial treatment gap. To support clinicians and increase access to care, researchers in the field of Artificial Intelligence (AI) and Virtual Reality (VR) have investigated technology-mediated psychotherapy for years.

However, research about stakeholders' concerns and their readiness to use AI in psychotherapy remains scarce. This study focuses on a user-centered approach to accommodate patients' concerns and, based on the results, implement measures to foster self-disclosure and trust towards an embodied AI therapist in VR.

First, we conducted an online study with mental health patients (N = 152), which identified data autonomy and transparency as their primary ethical concerns. In a subsequent in-person VR study (N = 90), we compared effects of increased data autonomy and transparency on self-disclosure and trust towards an embodied AI therapist.

Results indicated that higher data autonomy led to greater self-disclosure, while transparency had no significant effect. Manipulating data autonomy and transparency did not affect perceived trust, though exploratory calculations revealed that women reported significantly higher trust levels than men. These findings illuminate patients' priorities and provide implications for technical designs for AI-driven mental health care.

Florian Kern, Lukas Polifke, Paula Friedrich, Marc Erich Latoschik, Carolin Wienrich, David Obremski,

CECA - A Configurable Framework for Embodied Conversational AI Agents in Extended Reality, In 2026 IEEE Conference on Virtual Reality and 3D User Interfaces Abstracts and Workshops (VRW).

2026. To be published


@inproceedings{kern2026configurable,

author = {Florian Kern and Lukas Polifke and Paula Friedrich and Marc Erich Latoschik and Carolin Wienrich and David Obremski},

year = {2026},

booktitle = {2026 IEEE Conference on Virtual Reality and 3D User Interfaces Abstracts and Workshops (VRW)},

title = {CECA - A Configurable Framework for Embodied Conversational AI Agents in Extended Reality}

}

Abstract:

We present CECA, a configurable framework for embodied conversational AI agents in Unity-based extended reality (XR) applications. CECA employs a client–server architecture to decouple agent logic from game engine–based embodiment. Built on LiveKit Agents, our approach integrates speech-to-text (STT), large language models (LLMs), and text-to-speech (TTS) into a unified, streaming voice-to-voice pipeline configured via metadata rather than code changes. We outline how this architecture flexibly integrates local and cloud AI providers while mitigating limited provider SDK support in Unity. Finally, we highlight opportunities for future work, including multi-agent scenarios, higher-level templates for XR research, and systematic user studies.
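
The idea of a voice-to-voice pipeline that is configured via metadata rather than code changes can be sketched as follows. This is an illustration only and does not use the CECA or LiveKit Agents APIs: every name here (`VoicePipeline`, `build_pipeline`, the provider registries and the stub providers) is hypothetical, and the stubs process whole messages instead of streaming.

```python
from dataclasses import dataclass
from typing import Callable, Dict


# Hypothetical stand-ins for real STT, LLM, and TTS providers.
def echo_stt(audio: bytes) -> str:
    """Pretend transcription: treat the audio bytes as UTF-8 text."""
    return audio.decode("utf-8")


def upper_llm(prompt: str) -> str:
    """Pretend language model: answer with the upper-cased prompt."""
    return prompt.upper()


def bytes_tts(text: str) -> bytes:
    """Pretend speech synthesis: encode the text as bytes."""
    return text.encode("utf-8")


# Registries map a provider name used in the metadata to an implementation.
STT_PROVIDERS: Dict[str, Callable[[bytes], str]] = {"echo": echo_stt}
LLM_PROVIDERS: Dict[str, Callable[[str], str]] = {"upper": upper_llm}
TTS_PROVIDERS: Dict[str, Callable[[str], bytes]] = {"bytes": bytes_tts}


@dataclass(frozen=True)
class VoicePipeline:
    stt: Callable[[bytes], str]
    llm: Callable[[str], str]
    tts: Callable[[str], bytes]

    def respond(self, audio: bytes) -> bytes:
        """Run one voice-to-voice turn: speech in, speech out."""
        return self.tts(self.llm(self.stt(audio)))


def build_pipeline(metadata: Dict[str, str]) -> VoicePipeline:
    """Select providers by name, so swapping one only changes the metadata."""
    return VoicePipeline(
        stt=STT_PROVIDERS[metadata["stt"]],
        llm=LLM_PROVIDERS[metadata["llm"]],
        tts=TTS_PROVIDERS[metadata["tts"]],
    )


pipeline = build_pipeline({"stt": "echo", "llm": "upper", "tts": "bytes"})
reply = pipeline.respond(b"hello agent")
```

Registering another provider under a new name and changing one metadata value would switch the pipeline to it without touching `VoicePipeline` itself, which is the decoupling the abstract describes.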

Smi Hinterreiter, Martin Wessel, Fabian Schliski, Isao Echizen, Marc Erich Latoschik, Timo Spinde,

NewsUnfold: Creating a News-Reading Application That Indicates Linguistic Media Bias and Collects Feedback, In Proceedings of the International AAAI Conference on Web and Social Media, Vol. 19.

2025.


@article{hinterreiter2025newsunfold,

author = {Smi Hinterreiter and Martin Wessel and Fabian Schliski and Isao Echizen and Marc Erich Latoschik and Timo Spinde},

journal = {Proceedings of the International AAAI Conference on Web and Social Media},

url = {https://ojs.aaai.org/index.php/ICWSM/article/view/35847},

year = {2025},

volume = {19},

title = {NewsUnfold: Creating a News-Reading Application That Indicates Linguistic Media Bias and Collects Feedback}

}

Abstract:

Media bias is a multifaceted problem, leading to one-sided views and impacting decision-making. A way to address digital media bias is to detect and indicate it automatically through machine-learning methods. However, such detection is limited due to the difficulty of obtaining reliable training data. Human-in-the-loop-based feedback mechanisms have proven an effective way to facilitate the data-gathering process. Therefore, we introduce and test feedback mechanisms for the media bias domain, which we then implement on NewsUnfold, a news-reading web application to collect reader feedback on machine-generated bias highlights within online news articles. Our approach augments dataset quality by significantly increasing inter-annotator agreement by 26.31% and improving classifier performance by 2.49%. As the first human-in-the-loop application for media bias, the feedback mechanism shows that a user-centric approach to media bias data collection can return reliable data while being scalable and evaluated as easy to use. NewsUnfold demonstrates that feedback mechanisms are a promising strategy to reduce data collection expenses and continuously update datasets to changes in context.

Peter Kullmann, Theresa Schell, Timo Menzel, Mario Botsch, Marc Erich Latoschik,

Coverage of Facial Expressions and Its Effects on Avatar Embodiment, Self-Identification, and Uncanniness, In IEEE Transactions on Visualization and Computer Graphics, Vol. 31(5), pp. 3613-3622.

2025.


@article{kullmann2025coverage,

author = {Peter Kullmann and Theresa Schell and Timo Menzel and Mario Botsch and Marc Erich Latoschik},

journal = {IEEE Transactions on Visualization and Computer Graphics},

number = {5},

url = {https://ieeexplore.ieee.org/document/10919002},

year = {2025},

pages = {3613-3622},

volume = {31},

doi = {10.1109/TVCG.2025.3549887},

title = {Coverage of Facial Expressions and Its Effects on Avatar Embodiment, Self-Identification, and Uncanniness}

}

Abstract:

Facial expressions are crucial for many eXtended Reality (XR) use cases, from mirrored self exposures to social XR, where users interact via their avatars as digital alter egos. However, current XR devices differ in sensor coverage of the face region. Hence, a faithful reconstruction of facial expressions either has to exclude these areas or synthesize missing animation data with model-based approaches, potentially leading to perceivable mismatches between executed and perceived expression. This paper investigates potential effects of the coverage of facial animations (none, partial, or whole) on important factors of self-perception. We exposed 83 participants to their mirrored personalized avatar. They were shown their mirrored avatar face with upper and lower face animation, upper face animation only, lower face animation only, or no face animation. Whole animations were rated higher in virtual embodiment and slightly lower in uncanniness. Missing animations did not differ from partial ones in terms of virtual embodiment. Contrasts showed significantly lower humanness, lower eeriness, and lower attractiveness for the partial conditions. For questions related to self-identification, effects were mixed. We discuss participants' shift in body part attention across conditions. Qualitative results show participants perceived their virtual representation as fascinating yet uncanny.